Searching — again! — for your car keys that you casually tossed on top of a pile of papers, books and other materials after a night out? Perhaps you need the help of a newly designed robot that can find objects through the mess by sensing what you and other humans can’t see.

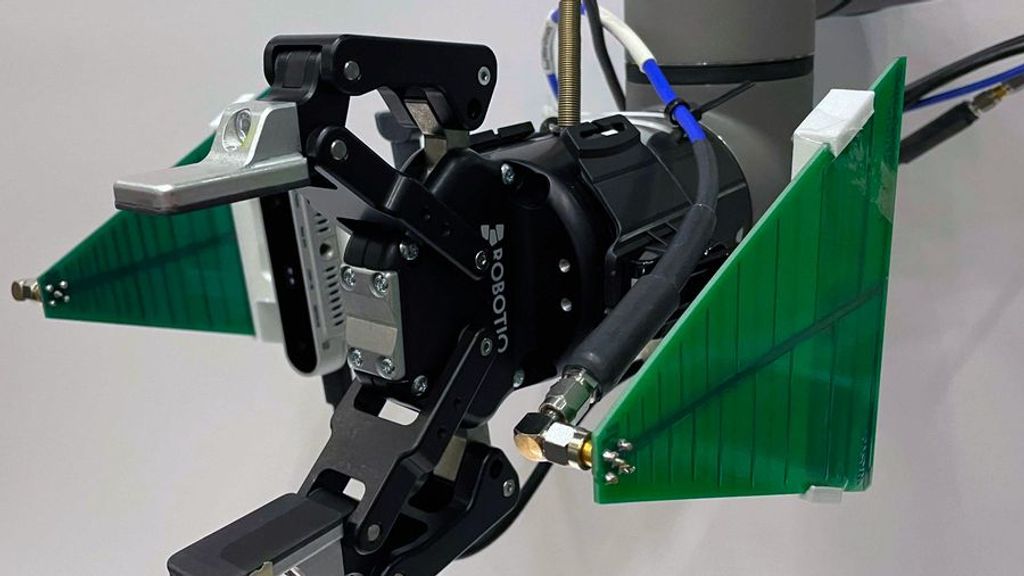

Using a camera alongside an extra sensor, researchers at the Massachusetts Institute of Technology designed a robot that can sense where an object is even if it is hidden under other objects.

“One of the really great parts about the system is it can see through items. It can find items it can’t necessarily find with a camera,” researcher Isaac Perper told Zenger.

For the sensor to find an out-of-sight object, the object needs a tag that sends radio signals to the robot. The robot uses those signals to determine where the object might be, even if it is hidden under other items.

“The [radio frequency] perception module was able to localize the tagged items,” lead author of the research paper Tara Boroushaki told Zenger.

The researchers’ use of radio signals builds on decades of previous research in radio-frequency technology. Radio-frequency identification tags have primarily been used to send signals to receivers that can track where the tag is located, as described by Ron Weinstein in his 2005 paper outlining radio-frequency tags and their uses. The MIT researchers applied this technology to help the robot find the general locations of objects. By including a camera, they were able to improve the robot’s ability to grab items.

The researchers then created a simulated environment to teach the robot to quickly find items using both the camera and the radio signals.

“There are a lot of factors that are really hard to modulate and optimize. So what we did was create a simulation environment and make a simulation robot in it,” Boroushaki said.

In the simulation, the robot was rewarded for finding objects, and it was punished for making mistakes such as hitting other objects or going to incorrect places.

“Over time it learns what actions are good … and what actions are bad,” Boroushaki said. “This is also how our brain learns. We get rewarded from our teachers, from our parents, from a computer game, etc. The same thing happens in reinforcement learning.

“We did it in the simulation because it takes thousands, or sometimes millions of trials, for [the robot] to learn. It’s really hard to do it in the actual world because you have to make sure it doesn’t hit anyone or break itself, but you can do it easily in simulation.”
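The reward-and-punishment idea Boroushaki describes can be illustrated with a toy sketch. This is not the researchers’ system: the action names, reward values and the simple epsilon-greedy learning rule below are all assumptions made for illustration, since the article gives none of these details.

```python
import random

# Hypothetical outcomes and reward values (not from the MIT work):
# finding the item is rewarded, mistakes are punished.
OUTCOME_REWARD = {
    "hit_other_object": -1.0,
    "go_to_wrong_place": -0.5,
    "grasp_target": 1.0,
}


def run_trials(num_trials=1000, epsilon=0.1, seed=0):
    """Estimate how good each action is from repeated simulated trials."""
    rng = random.Random(seed)
    actions = list(OUTCOME_REWARD)
    value = {a: 0.0 for a in actions}  # running average reward per action
    count = {a: 0 for a in actions}    # how often each action was tried

    for _ in range(num_trials):
        if rng.random() < epsilon:
            action = rng.choice(actions)              # explore at random
        else:
            action = max(actions, key=value.get)      # exploit best estimate
        r = OUTCOME_REWARD[action]
        count[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        value[action] += (r - value[action]) / count[action]
    return value


if __name__ == "__main__":
    values = run_trials()
    print(max(values, key=values.get), values)
```

Even in this toy version, the learner needs repeated trials to sort good actions from bad ones, which is cheap in simulation and risky on a physical robot.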

While the robot is still being developed, the researchers predict it will eventually have real-world applications.

“One application is in a warehouse,” Boroushaki said. “A robot could go and find the objects that you requested, put them in your package and then double-check if it put the right item and send it out to you.”

In industrial settings such as warehouses, the robot could improve efficiency. It could also have a role in households.

“If you’re sick, a robot can take care of your family and clean for you. When it’s time for you to take your medication, it can go and get your medication for you and make sure that you take your medication,” Boroushaki said.

Perper raised the image of a crowded shelf. If something on the shelf hides the medication you are seeking, the robot would still be able to find and grab it by tracking the medication with its sensor.

“It can do these things that cameras alone are incapable of,” Perper said.

“Right now, you can think of this as a Roomba on steroids, but in the near term, this could have a lot of applications in manufacturing and warehouse environments,” said senior study author Fadel Adib, an associate professor in the Department of Electrical Engineering and Computer Science and director of the Signal Kinetics group in the MIT Media Lab.

The researchers said the project will be presented at the 2021 Association for Computing Machinery SenSys Conference in Portugal in November.

In the meantime, the MIT researchers will continue to improve their robot’s efficiency and speed.

“Those are all things that we have to figure out over the next few months,” Perper said.

Edited by Richard Pretorius and Kristen Butler
